The 2026 Efficiency Pivot

The 2026 Efficiency Paradox: High-power AI and Rails 8 are removing tech "plumbing," letting devs focus on intent over complexity.

How Claude 4.6 and Rails 8 Are Killing the "Plumbing" of Tech

For years, the "AI Revolution" felt like a tax on our cognitive bandwidth. To gain a marginal increase in productivity, developers and strategists were forced to manage sprawling tech stacks, navigate siloed assistants, and fight a constant war against models that refused to follow complex instructions. We were promised efficiency, but we inherited complexity.

In 2026, the signal has finally separated from the noise. We have entered the era of the Efficiency Paradox: a definitive shift where "more" context and "more" power finally require significantly less effort and fewer external dependencies. Leading this charge is the simultaneous maturity of Anthropic’s Claude 4.6 and the Rails 8 "Solid" revolution.

Here is why 2026 is the year we stopped building plumbing and started building products.

1. The "Sonnet Flip": Flagship Intelligence as a Mid-Tier Commodity

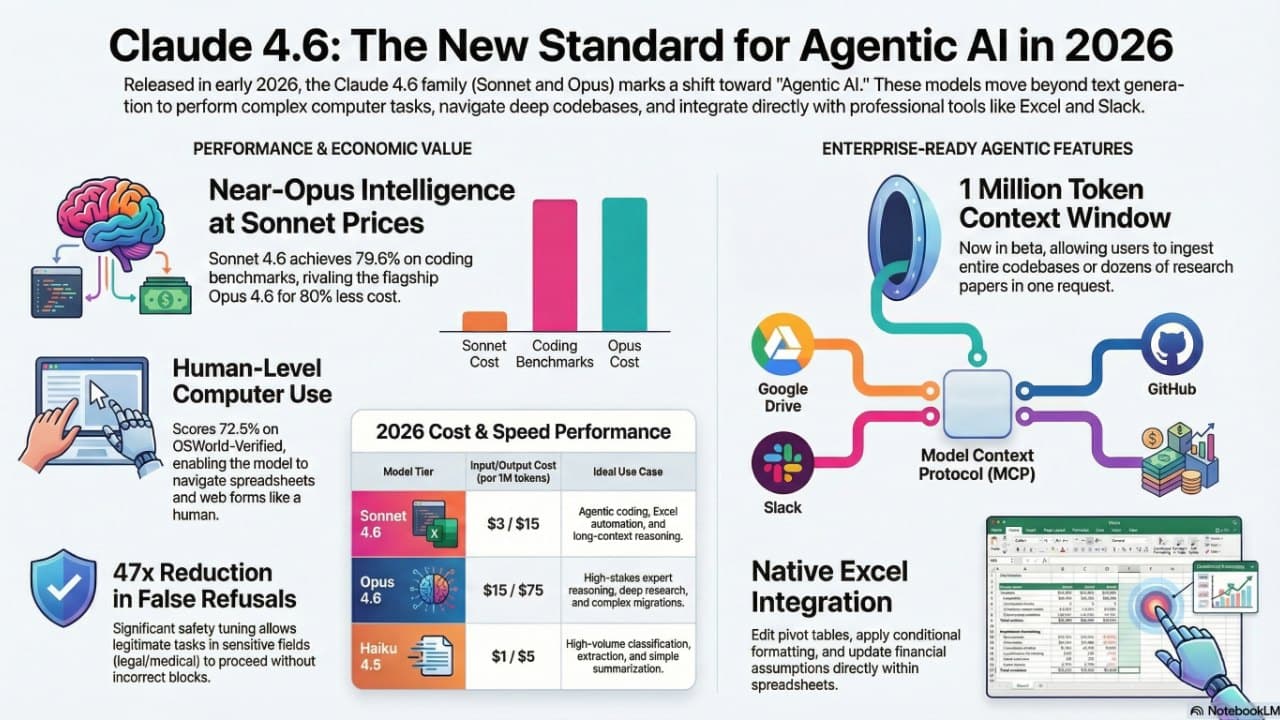

The most jarring economic signal this year is the "Sonnet Flip." Claude Sonnet 4.6 has effectively achieved parity with the "Opus" flagship models of the previous generation, transforming top-tier intelligence into a commodity.

The price-to-performance ratio has shifted radically. Opus 4.6 remains the choice for expert-level synthesis at $15 per million input tokens and $75 per million output tokens, but Sonnet 4.6 delivers nearly identical performance on agentic coding and computer-use tasks at just $3 / $15, one fifth of the price. Perhaps more telling, in blind "human preference" tests for coding, Sonnet 4.6 was preferred over previous flagships 59% of the time, largely due to a sharp reduction in "lazy" code omissions.

One technical shift to watch: Claude 4.6 now uses "thinking tokens" for its reasoning process. These are billed at the same rate as output tokens, meaning the "cost of thinking" is now a transparent line item in every developer's budget.
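To make the "cost of thinking" concrete, here is a minimal budgeting sketch. It uses only the per-million-token prices quoted in this article and the rule that thinking tokens are billed as output. The `Pricing` class, the `request_cost` helper, and the workload sizes are illustrative assumptions, not part of any official SDK.

```python
from dataclasses import dataclass

TOKENS_PER_MILLION = 1_000_000


@dataclass(frozen=True)
class Pricing:
    """USD per million tokens, as quoted in this article."""
    input_per_mtok: float
    output_per_mtok: float


# Figures taken from the article; verify current pricing before budgeting.
OPUS_4_6 = Pricing(input_per_mtok=15.00, output_per_mtok=75.00)
SONNET_4_6 = Pricing(input_per_mtok=3.00, output_per_mtok=15.00)


def request_cost(pricing, input_tokens, output_tokens, thinking_tokens=0):
    """Estimate one request's cost in USD.

    Thinking tokens are added to visible output tokens because both
    are billed at the output rate.
    """
    billed_output = output_tokens + thinking_tokens
    total = (
        input_tokens * pricing.input_per_mtok
        + billed_output * pricing.output_per_mtok
    )
    return total / TOKENS_PER_MILLION


if __name__ == "__main__":
    # Hypothetical workload: 50K input, 4K visible output, 8K thinking tokens.
    for name, pricing in (("Opus 4.6", OPUS_4_6), ("Sonnet 4.6", SONNET_4_6)):
        cost = request_cost(pricing, 50_000, 4_000, thinking_tokens=8_000)
        print(f"{name}: ${cost:.2f}")
```

For this hypothetical workload the estimate comes to about $1.65 on Opus and $0.33 on Sonnet. In both cases the 8K thinking tokens cost twice as much as the 4K of visible output, which is why they deserve their own line in a budget.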

2. Computer Use Hits the Reliability Threshold

If 2024 was the year of the prototype, 2026 is the year of the deployment. Anthropic’s "Computer Use" feature has moved from a novelty to a reliable enterprise tool.

- OSWorld-Verified Scores: Sonnet’s score rose from 61.4% in version 4.5 to 72.5% in 4.6, an 11.1-point gain that brings it to a level where autonomous business process automation becomes practical.

- Security & Resilience: Against "Best-of-N" jailbreak and prompt injection attempts, Claude 4.6’s failure rate fell from 49.36% to 0.51%, roughly a 97-fold reduction.

This reliability allows Claude to navigate legacy systems, procurement portals, and internal dashboards that lack APIs—effectively turning any software with a UI into a programmable endpoint.

3. The 1-Million Token Context: Long-Horizon Planning

The expansion of the context window to 1 million tokens for Sonnet 4.6 isn't just about "reading more books." It’s about "long-horizon planning." In the "Vending-Bench Arena" experiment, Claude 4.6 was able to reason through multi-step economic strategies that unfolded over months of simulated time, investing in infrastructure early to maximize late-stage profitability.

For the enterprise, this means a model can hold an entire codebase, a year’s worth of Jira tickets, and the full architectural documentation in context simultaneously. However, efficiency comes with a caveat: the "Long Context Premium." Crossing the 200K input token threshold triggers a higher pricing tier ($6 per million input / $22.50 per million output, versus the standard $3 / $15), forcing developers to be strategic about what they "remind" the AI of.
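The effect of the premium tier on a single request can be sketched as follows. The rates and the 200K threshold come from this article. The sketch assumes that once the input exceeds the threshold, the whole request (not just the excess) is billed at the premium rate, which is worth confirming against current pricing documentation. The function name is hypothetical.

```python
LONG_CONTEXT_THRESHOLD = 200_000  # input tokens

# (input rate, output rate) in USD per million tokens, as quoted in the article.
STANDARD_RATES = (3.00, 15.00)
LONG_CONTEXT_RATES = (6.00, 22.50)


def sonnet_request_cost(input_tokens, output_tokens):
    """Estimate a Sonnet 4.6 request's cost in USD.

    Assumption: an input above the threshold moves the entire request
    onto the long-context rates.
    """
    if input_tokens > LONG_CONTEXT_THRESHOLD:
        input_rate, output_rate = LONG_CONTEXT_RATES
    else:
        input_rate, output_rate = STANDARD_RATES
    return (input_tokens * input_rate + output_tokens * output_rate) / 1_000_000


if __name__ == "__main__":
    print(f"150K in / 2K out: ${sonnet_request_cost(150_000, 2_000):.3f}")
    print(f"250K in / 2K out: ${sonnet_request_cost(250_000, 2_000):.3f}")
```

Under that assumption, growing the prompt from 150K to 250K tokens raises the estimate from roughly $0.48 to roughly $1.55: the input grows by two thirds, but the bill more than triples.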

4. MCP: Live Feeds Over Static Libraries

The Model Context Protocol (MCP) has become the universal bridge. Instead of manually uploading PDFs or copy-pasting code snippets, MCP allows Claude 4.6 to "plug into" live data silos. Whether it’s a Slack thread, a GitHub repo, or a Google Drive folder, the AI now treats external data as a live extension of its own memory. This removes the "stale data" problem that plagued early AI integrations.
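Under the hood, MCP messages are JSON-RPC 2.0, and a client asks a server to run a tool with the `tools/call` method. The sketch below only builds such a request as a Python dictionary and does not open a connection. The tool name `search_issues` and its arguments are hypothetical, since each MCP server defines its own tools.

```python
import json
from itertools import count

# Simple incrementing request IDs, so responses can be matched to requests.
_request_ids = count(1)


def mcp_tool_call(tool_name, arguments):
    """Build a JSON-RPC 2.0 request for MCP's `tools/call` method."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


if __name__ == "__main__":
    # Hypothetical tool exposed by a hypothetical issue-tracker server.
    request = mcp_tool_call(
        "search_issues",
        {"repo": "example/app", "query": "flaky test"},
    )
    print(json.dumps(request, indent=2))
```

Because the request runs against the live source each time it is sent, the answer reflects the data as it is now, not as it was when someone last uploaded a file.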

5. Rails 8 and the "Solid" Revolution: Killing the Infrastructure Tax

While Claude is automating the "thinking," Rails 8 is automating the "infrastructure." For a decade, web development meant juggling a tangle of dependencies: Redis for background jobs, Memcached for caching, and expensive hosting even for simple apps.

Rails 8 has declared war on this complexity with the "Solid" trifecta:

- Solid Queue: Background job processing that runs on your database instead of Redis.

- Solid Cache: Caching backed by the database on disk, with no Memcached or Redis to run.

- Solid Cable: Real-time WebSocket messaging through the database, without the Redis tax.
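The idea behind all three is that an ordinary database table can do the work of a dedicated service. As a rough illustration of the pattern, here is a minimal database-backed job queue using Python's built-in `sqlite3` module. This is not Solid Queue's implementation: the `jobs` table, its columns, and the function names are invented for this sketch.

```python
import json
import sqlite3


def open_queue(path=":memory:"):
    """Open (or create) a database holding a single `jobs` table."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS jobs ("
        " id INTEGER PRIMARY KEY AUTOINCREMENT,"
        " payload TEXT NOT NULL,"
        " status TEXT NOT NULL DEFAULT 'pending')"
    )
    conn.commit()
    return conn


def enqueue(conn, payload):
    """Store a job as a JSON row and return its id."""
    with conn:  # commits on success, rolls back on error
        cur = conn.execute(
            "INSERT INTO jobs (payload) VALUES (?)", (json.dumps(payload),)
        )
    return cur.lastrowid


def claim_next(conn):
    """Claim the oldest pending job, or return None if nothing was claimed."""
    row = conn.execute(
        "SELECT id, payload FROM jobs WHERE status = 'pending' "
        "ORDER BY id LIMIT 1"
    ).fetchone()
    if row is None:
        return None
    job_id, payload = row
    with conn:
        # Conditional update: it only succeeds if the job is still pending,
        # so two workers cannot both claim the same row.
        cur = conn.execute(
            "UPDATE jobs SET status = 'running' "
            "WHERE id = ? AND status = 'pending'",
            (job_id,),
        )
    if cur.rowcount != 1:
        return None  # another worker claimed it first
    return job_id, json.loads(payload)


if __name__ == "__main__":
    queue = open_queue()
    enqueue(queue, {"task": "send_welcome_email", "user_id": 42})
    print(claim_next(queue))
    print(claim_next(queue))  # queue is now empty
```

A production queue adds retries, scheduling, and concurrency controls on top, but the core trade is the same: one fewer service to deploy and monitor, in exchange for extra load on the database.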

By making SQLite production-ready (using write-ahead logging, or WAL, mode and the "NORMAL" synchronous setting), Rails 8 allows a developer to host a high-traffic application on a single $5/month VPS, using Kamal 2 for deployment.
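Both settings are plain SQLite pragmas, so they can be tried outside Rails. The sketch below applies them with Python's built-in `sqlite3` module and reads them back. It shows the SQLite settings themselves, not how Rails 8 configures them internally, and the `open_tuned` helper and file name are hypothetical.

```python
import os
import sqlite3
import tempfile


def open_tuned(path):
    """Open a SQLite file with WAL journaling and synchronous=NORMAL.

    Returns the connection plus the values SQLite reports back.
    """
    conn = sqlite3.connect(path)
    # WAL lets readers keep reading while a single writer appends to the log.
    journal_mode = conn.execute("PRAGMA journal_mode=WAL").fetchone()[0]
    # NORMAL syncs to disk less often than FULL. In WAL mode the database
    # stays consistent, but the most recent commits may be lost on power loss.
    conn.execute("PRAGMA synchronous=NORMAL")
    synchronous = conn.execute("PRAGMA synchronous").fetchone()[0]
    return conn, journal_mode, synchronous


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        conn, journal_mode, synchronous = open_tuned(os.path.join(tmp, "app.db"))
        print(journal_mode, synchronous)
        conn.close()
```

WAL mode needs a database file on disk, so an in-memory database will not report it. SQLite reports the synchronous level as an integer, where 1 means NORMAL.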

For the strategist, the takeaway is clear: Rails 8 minimizes infrastructure management so you can spend your newfound cognitive bandwidth on managing AI agents. As one developer noted, there is a distinct "joy" in building web applications the "old-fashioned way" when the framework handles the complexity.

6. Solving the Over-Refusal Crisis

For professionals in healthcare, legal, and security, the greatest hurdle has been the "over-refusal" crisis—where models would block legitimate requests because they sounded "sensitive."

Sonnet 4.6 has nearly eliminated this friction. For "higher-difficulty benign prompts"—the ambiguous, technical requests professionals actually make—the refusal rate has dropped from 8.5% to a mere 0.18%. This 47x reduction means the AI can finally be a reliable partner in high-stakes fields without the dreaded "The computer says no" response.

7. Conclusion: The Age of the Self-Sufficient Developer

The combined force of agentic AI, massive context windows, and framework simplification is fundamentally changing the economics of software development. We are no longer in the business of managing "plumbing"—configuring queues, setting up caches, or wiring data silos.

The development cycle is shifting from managing complexity to managing intent. As the manual labor of tech is automated or stripped away by "Solid" architecture, a final, provocative question remains:

How will you reallocate the hours saved now that the "plumbing" of your work is finally handling itself?
