2026 AI Engineering: The Rise of Autonomous Systems

Software is no longer being "authored"; it is being steered. In 2026, we have entered the era of autonomous agentic systems.

1. Introduction: The Death of the "Chatbot" Era

By March 2026, the tech industry has decisively exited the "autocomplete" phase. In 2024, we were impressed by tools that could finish a line of Java or suggest a regex pattern. Today, that reactive "chatbot" model is a relic. We have entered the era of the autonomous agent—systems that don't just type, but plan, reason, and execute across distributed codebases.

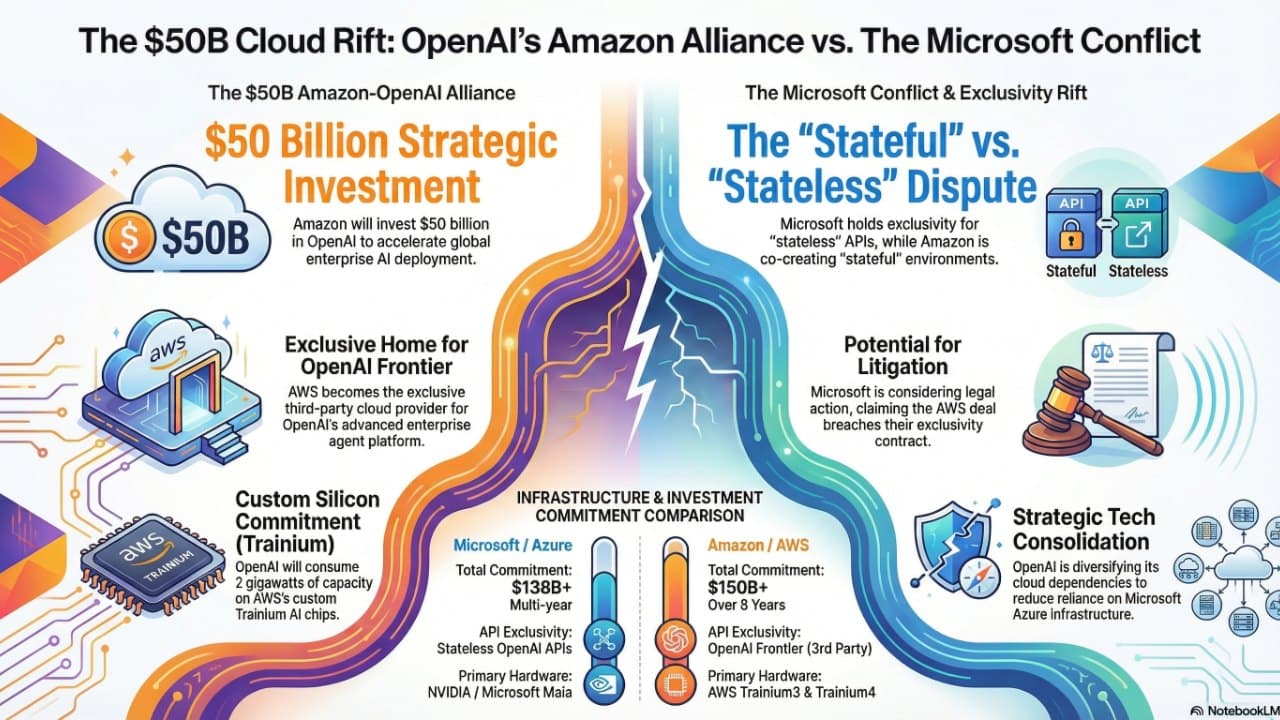

The scale of this transition is underpinned by a level of capital injection previously reserved for nation-state infrastructure. While the headlines focus on Amazon’s $50 billion partnership with OpenAI, the true scope is a staggering $110 billion total financing round involving SoftBank and NVIDIA. With over 60 million AI-assisted code reviews now processed on GitHub—accounting for one in five reviews platform-wide—we are witnessing a fundamental re-architecting of how software is built. Software is no longer being "authored"; it is being steered.

2. The "Stateful" Loophole: Why the Amazon-OpenAI Deal is a Legal Masterstroke

The strategic partnership between Amazon and OpenAI is more than a massive infrastructure deal; it is a surgical maneuver around Microsoft’s exclusivity. By co-creating a Stateful Runtime Environment (SRE) on Amazon Bedrock, OpenAI has navigated a loophole in its original Azure contract while deepening its multi-cloud footprint.

The distinction is purely technical:

- Stateless APIs (Microsoft/Azure): Forget context after every call.

- Stateful Runtimes (Amazon Bedrock): Maintain persistence, identity, and memory.

By building on Amazon’s Bedrock, OpenAI can serve non-API products that require deep session memory without violating the Azure exclusivity clause. These workloads will run on Amazon’s custom Trainium3 and Trainium4 silicon, part of a $100 billion infrastructure pact.
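The stateless/stateful split is easier to see in code. The sketch below is purely illustrative: `StatelessEndpoint` and `StatefulSession` are hypothetical names, not the Azure or Bedrock APIs, and the only point is to show where conversation state lives in each model.

```python
from dataclasses import dataclass, field


class StatelessEndpoint:
    """Hypothetical stateless API: each call sees only the request it is given."""

    def call(self, messages: list[str]) -> str:
        # The caller must resend the full history on every request.
        return f"seen {len(messages)} message(s)"


@dataclass
class StatefulSession:
    """Hypothetical stateful runtime: the server side keeps identity and memory."""

    session_id: str
    memory: list[str] = field(default_factory=list)

    def call(self, message: str) -> str:
        # The runtime appends to its own persisted history between calls.
        self.memory.append(message)
        return f"seen {len(self.memory)} message(s)"


stateless = StatelessEndpoint()
first = stateless.call(["plan the migration"])
second = stateless.call(["now run step 2"])  # earlier context is gone

session = StatefulSession(session_id="demo")
session.call("plan the migration")
third = session.call("now run step 2")  # earlier context is retained
```

In the stateless case the client owns the history and pays to resend it; in the stateful case the runtime owns it, which is what makes long-running agent sessions practical.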

3. The 11-Week Reality Check: Measuring AI ROI

New data from the 2025 Developer Productivity Report suggests that AI gains are not a "flip the switch" event. Companies that expected immediate 40% efficiency gains were met with a harsh reality: software is still about human logic.

- The 11-Week Ramp-up: It takes a technical team nearly one fiscal quarter to integrate agentic workflows into their mental models.

- The Planning Tax: Gains in typing speed are often offset by the increased time required for higher-level architectural planning.

- The Trust Gap: While developers accept 88% of AI code suggestions, internal audits show that only 33% of developers actually "trust" the AI's logic—meaning humans are spending more time reviewing code than writing it.
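The "Planning Tax" and the review overhead can be put into a back-of-the-envelope calculation. The function and every number below are hypothetical and do not come from the report; they only illustrate how typing gains can be largely cancelled out.

```python
def net_hours_saved(typing_saved: float, extra_planning: float, extra_review: float) -> float:
    """Net weekly hours saved per developer; a negative value means a net loss."""
    return typing_saved - (extra_planning + extra_review)


# Hypothetical week: 6 h saved on typing, 2.5 h more planning, 3 h more review.
week = net_hours_saved(typing_saved=6.0, extra_planning=2.5, extra_review=3.0)
print(f"net hours saved: {week:.1f}")  # prints "net hours saved: 0.5"
```

Under these assumed numbers, a six-hour typing gain shrinks to half an hour once planning and review are counted.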

4. Programming in Plain English: The Docstring is the Implementation

The shift toward "Natural Language App Building" has moved from a gimmick to a production standard. Tools like GitHub Spark and the rise of Strands' AI functions have fundamentally changed the "source of truth."

In 2026, many production codebases contain "AI functions"—methods where the body of the function is left empty, replaced by a prompt. The code is generated and cached at runtime. Engineering effort has moved from "writing the loop" to "defining the post-condition." If the AI knows the expected output format (Pydantic/JSON Schema), it can self-correct until the logic passes validation.
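A minimal sketch of that validate-and-retry loop follows. It is not the Strands or GitHub Spark API: `ai_function`, `validate_invoice`, and `fake_model` are hypothetical names, the model is a stub, a hand-written check stands in for Pydantic or JSON Schema, and caching is omitted.

```python
import json
from typing import Callable


def validate_invoice(raw: str) -> dict:
    """Post-condition: JSON object with a string 'customer' and a numeric 'total'."""
    data = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) if malformed
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    if not isinstance(data.get("customer"), str):
        raise ValueError("'customer' must be a string")
    total = data.get("total")
    if isinstance(total, bool) or not isinstance(total, (int, float)):
        raise ValueError("'total' must be a number")
    return data


def ai_function(prompt: str, model: Callable[[str], str], max_attempts: int = 3) -> dict:
    """Call the model until its output passes validation, feeding errors back."""
    feedback = ""
    for _ in range(max_attempts):
        raw = model(prompt + feedback)
        try:
            return validate_invoice(raw)
        except ValueError as err:
            # Append the validation error so the next attempt can self-correct.
            feedback = f"\nPrevious output was rejected: {err}"
    raise RuntimeError(f"no valid output after {max_attempts} attempts")


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for an LLM call: fails once, then complies."""
    if "rejected" not in prompt:
        return '{"customer": "Acme", "total": "12.50"}'  # total is a string: invalid
    return '{"customer": "Acme", "total": 12.5}'


result = ai_function("Extract the invoice as JSON.", fake_model)
```

The design choice worth noting is that the validator, not the prompt, is the contract: the caller only ever receives output that satisfied the post-condition, or an explicit failure after the retry budget is spent.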

5. "Silence as Signal" in Code Review

As AI agents take over the "grunt work" of code reviews, a new metric has emerged: Signal Density. In 2024, AI reviewers were notorious for "nitpicking" (commenting on style and naming). By 2026, the most advanced AI reviewers have learned the value of silence.

- Selective Commenting: AI agents now comment on only 29% of PRs, and those comments focus exclusively on logic flaws and security vulnerabilities.

- The 5.1 Sweet Spot: The most productive teams average 5.1 comments per review. Anything higher leads to "reviewer fatigue."

- Contextual Memory: Agents now remember mistakes made in previous PRs across different services, acting as a "living repository" of a company's technical debt.
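The article does not define Signal Density precisely, so the sketch below assumes one plausible reading: the share of reviews where the agent stays silent, plus the average number of comments on reviews where it does speak. The sample counts are invented for illustration.

```python
from statistics import mean


def review_stats(counts: list[int]) -> tuple[float, float]:
    """Return (share of silent reviews, mean comments per non-silent review)."""
    commented = [n for n in counts if n > 0]
    silent_share = counts.count(0) / len(counts)
    avg = mean(commented) if commented else 0.0
    return silent_share, avg


# Hypothetical comment counts for ten AI reviews; 0 means the agent stayed silent.
comments_per_review = [0, 4, 6, 0, 5, 7, 0, 3, 6, 5]
silent_share, avg_comments = review_stats(comments_per_review)
print(f"silent: {silent_share:.0%}, avg comments when speaking: {avg_comments:.1f}")
```

Tracking the two numbers separately matters: a low average can hide an agent that comments on everything, and a high silent share can hide one that floods the few PRs it does review.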

6. Architecture Wars: Extensions vs. Forks

The industry is currently split between two philosophies: the IDE Extension (VS Code/JetBrains) and the Agent-Native Fork (Cursor/Windsurf).

| Feature | IDE Extensions (Standard) | Agent-Native Forks (Cursor/Windsurf) |
|---|---|---|
| Control | High (Human-centric) | Mixed (Agent-centric) |
| Autonomy | Approval-based | Autonomous (Multi-file editing) |
| Context | Standard IDE context | 1M+ tokens (Whole-codebase reasoning) |
| Compliance | High (FedRAMP / SOC 2) | Emerging (Often lacks audit) |

To prevent total lock-in, the Model Context Protocol (MCP) has emerged as the "standard gauge" for agentic software, allowing tools to feed context into any environment.
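MCP messages are JSON-RPC 2.0, which is part of why the protocol is easy to adopt across editors. The sketch below builds two such requests by hand; `mcp_request` is a hypothetical helper and `search_code` is an invented tool name, so treat this as an illustration of the message shape rather than a client implementation.

```python
import json
from itertools import count
from typing import Optional

_ids = count(1)  # each request in a session gets its own id


def mcp_request(method: str, params: Optional[dict] = None) -> str:
    """Serialize a JSON-RPC 2.0 request of the kind an MCP client sends."""
    message = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# Ask the server which tools it exposes, then invoke one of them.
list_tools = mcp_request("tools/list")
call_tool = mcp_request(
    "tools/call",
    {"name": "search_code", "arguments": {"query": "TODO"}},  # hypothetical tool
)
```

Because the wire format is the same regardless of host, the same tool server can in principle feed context to an extension or to a fork without modification.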

7. Conclusion: The Future of the "Majestic Macroservice"

The shift toward autonomous, stateful systems requires a new kind of architectural stability. We are seeing a move toward "Neutral Governance," exemplified by Meta’s contribution of React to the Linux Foundation and the rise of the Rails Foundation.

Even the way we structure code is maturing. Planning Center’s "Stonehenge" architecture—a collection of "Majestic Macroservices"—proves that agents operate best on stable, community-governed foundations. In a world where an AI can turn a GitHub issue into a verified Pull Request while you sleep, your value is defined not by the code you write, but by the instructions you give.
